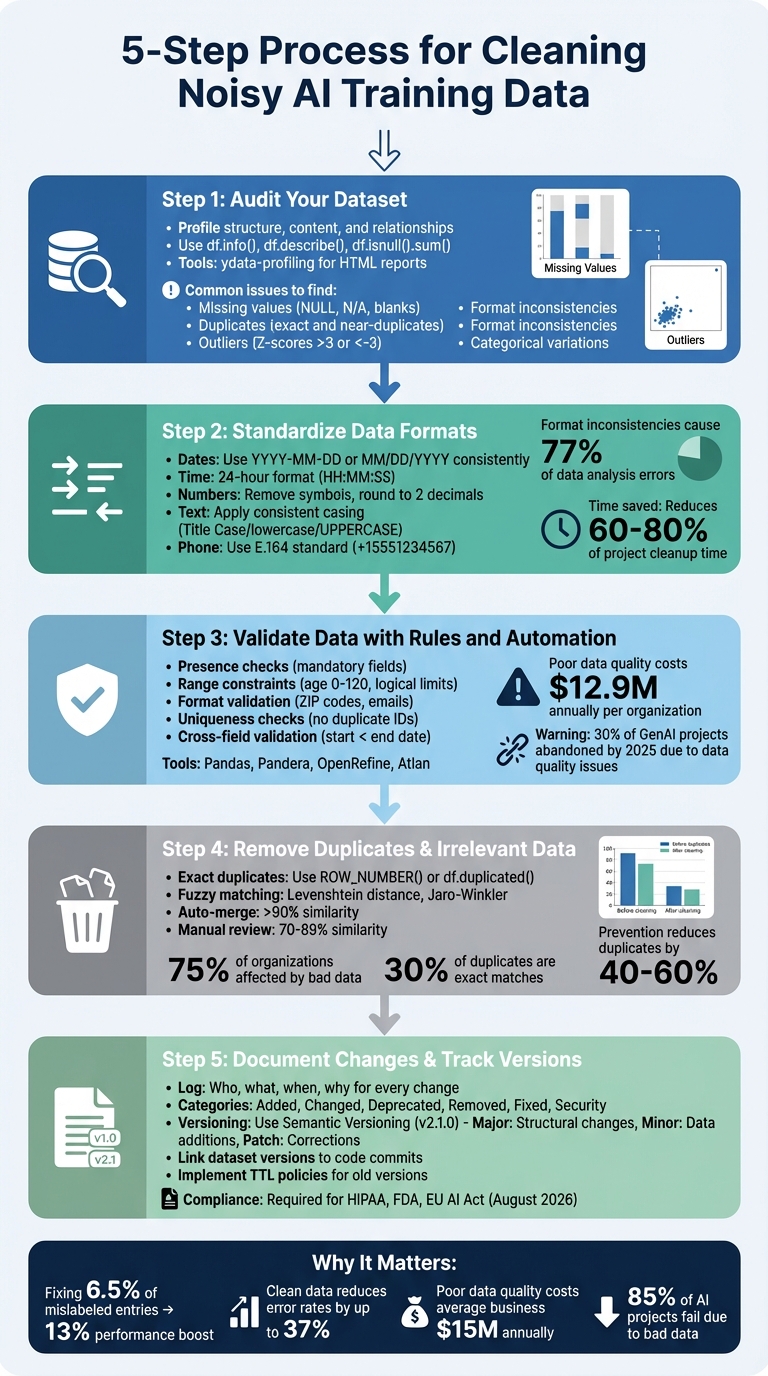

Checklist for Cleaning Noisy AI Training Data

Dirty data ruins AI models. It leads to inaccurate predictions, costly mistakes, and even biased outcomes. Cleaning your training data is a must to ensure your AI performs as intended.

Here’s the 5-step process to clean noisy data:

- Audit Your Dataset: Identify issues like missing values, duplicates, or outliers using tools like Python’s pandas or ydata-profiling.

- Standardize Data Formats: Ensure consistency in dates, numbers, and text fields to avoid misinterpretation.

- Validate Data: Apply rules (e.g., range checks, format validation) and use automation tools like Pandera or OpenRefine to catch errors.

- Remove Duplicates & Irrelevant Data: Eliminate repeated entries and outdated or non-contributory records.

- Document Changes & Track Versions: Maintain logs and version control for transparency and compliance.

Why it matters: Clean data improves AI accuracy significantly. For example, fixing just 6.5% of mislabeled entries can boost model performance by 13%. Skipping this step risks costly errors and project failures.

Your takeaway: Always clean, validate, and document your data before training AI systems. It’s the backbone of reliable AI results.

5-Step Process for Cleaning Noisy AI Training Data



Step 1: Audit Your Dataset

Take a close look at your dataset to identify hidden issues that could negatively impact the performance of your AI model.

Profile Your Dataset

Start by thoroughly reviewing your dataset's structure, content, and quality. Focus on three main areas: structure (data types and formats), content (missing values and summary statistics), and relationships (connections between fields).

In Python, pandas calls like df.info(), df.describe(), and df.isnull().sum() can help you quickly evaluate key aspects of your data. These checks address important questions: Are dates formatted correctly? What percentage of each column is missing? Do the minimum and maximum values make sense?
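As a minimal sketch of those checks, the snippet below runs them on a small made-up DataFrame. The column names and the `missing_report` helper are illustrative assumptions, not part of any library.

```python
import pandas as pd

def missing_report(df: pd.DataFrame) -> pd.DataFrame:
    """Per-column dtype, missing count, and missing percentage."""
    return pd.DataFrame({
        "dtype": df.dtypes.astype(str),
        "missing": df.isnull().sum(),
        "missing_pct": (df.isnull().mean() * 100).round(1),
    })

# Hypothetical sample data for illustration only
df = pd.DataFrame({
    "age": [34, None, 29, 41],
    "signup_date": ["2025-01-03", "2025-02-30", None, "2025-03-15"],
})

df.info()              # column types and non-null counts
print(df.describe())   # min, max, mean, and quartiles for numeric columns
report = missing_report(df)
print(report)
```

Note that "2025-02-30" is not flagged here: it is a non-null string, so an impossible date like this only surfaces once the column is parsed as a date.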

For a more detailed analysis, tools like ydata-profiling can generate HTML reports that highlight relationships, data skewness, and missing value patterns - all with minimal coding effort.

"The quality of the data is paramount to the performance of AI models. Models trained on noisy data risk making decisions that are not just wrong but potentially harmful." - Dr. Tom Mitchell, Professor of Machine Learning, Carnegie Mellon University

Once you've profiled your dataset, you can pinpoint specific issues that need attention.

Find Common Data Problems

Using your dataset profile as a guide, identify common issues that could compromise data quality. Here are some typical problems to watch for:

- Missing values: These might show up as blank cells, NULL entries, or "hidden nulls" like "N/A", "0", or "-" that aren't technically null but still represent missing information. These placeholders often require manual identification since standard libraries may overlook them.

- Duplicates: Look for exact duplicates, repeated IDs, or near-duplicates (e.g., "John Smith" vs. "john smith").

- Outliers and range violations: Examples include negative ages, a GPA of 5.0 on a 4.0 scale, or a $50,000 lunch expense. Use methods like Z-scores (above 3 or below -3) or the interquartile range (IQR) to flag these anomalies.

- Formatting inconsistencies: Ensure numbers, dates, and text are consistent across the dataset.

- Categorical variations: Watch for cases where the same category is represented in different ways, like "Elec", "Electronic", and "Electronics." These inconsistencies can inflate unique value counts and disrupt grouping operations. If a "Category" column has thousands of unique values, normalization is likely needed.
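The hidden-null problem from the list above can be sketched as follows. The placeholder list is an assumption to tune per dataset, and "0" is deliberately left out of the default because it is often a legitimate value.

```python
import pandas as pd

# Assumed placeholder strings; extend or shrink this per dataset.
HIDDEN_NULLS = ["", "N/A", "n/a", "NA", "-", "null", "None"]

def reveal_hidden_nulls(df: pd.DataFrame, placeholders=HIDDEN_NULLS) -> pd.DataFrame:
    """Return a copy where placeholder strings (after trimming) become real missing values."""
    out = df.copy()
    for col in out.columns:
        # Trim strings only; leave numbers and other types untouched.
        trimmed = out[col].map(lambda v: v.strip() if isinstance(v, str) else v)
        out[col] = out[col].mask(trimmed.isin(placeholders))
    return out

# Hypothetical sample data
df = pd.DataFrame({
    "city": ["Boston", "N/A", " - ", "Austin"],
    "category": ["Elec", "Electronics", "Electronic", "n/a"],
})
cleaned = reveal_hidden_nulls(df)
print(df.isnull().sum())        # reports no missing values
print(cleaned.isnull().sum())   # placeholders now count as missing
print(cleaned["category"].nunique())  # variant spellings still inflate the count
```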

Step 2: Standardize Data Formats

Once you've identified the issues in your dataset, the next step is to enforce consistency across all data formats. Format inconsistencies are a major roadblock - causing errors in 77% of data analysis and reporting tasks. On top of that, data scientists often spend 60% to 80% of their project time cleaning up messy datasets. Without standardization, AI models can misinterpret formatting differences as meaningful variations, which leads to flawed insights.

Standardize Date, Time, and Number Formats

Start by converting all date formats to a single standard. For U.S.-based datasets, use MM/DD/YYYY (e.g., 11/24/2025). For international compatibility, switch to YYYY-MM-DD (e.g., 2025-11-24). Always use a 24-hour format (HH:MM:SS) to avoid confusion between AM and PM. If your data spans multiple time zones, convert everything to UTC to ensure consistent comparisons.
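A short sketch of both conversions with pandas, using made-up values. Passing an explicit `format` avoids day/month guessing, and `utc=True` converts timestamps that carry offsets into UTC.

```python
import pandas as pd

# Hypothetical U.S.-style date strings
us_dates = pd.Series(["11/24/2025", "01/05/2026"])
parsed = pd.to_datetime(us_dates, format="%m/%d/%Y")
iso_dates = parsed.dt.strftime("%Y-%m-%d")

# Hypothetical timestamps with different UTC offsets
local = pd.Series(["2025-11-24T09:30:00-05:00", "2025-11-24T15:30:00+01:00"])
utc = pd.to_datetime(local, utc=True)
utc_text = utc.dt.strftime("%Y-%m-%d %H:%M:%S")

print(list(iso_dates))
print(list(utc_text))
```

Both sample timestamps describe the same instant, which only becomes obvious after the conversion to UTC.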

For numerical data, strip out symbols like currency signs, commas, and unit labels, and store the values as numeric types. Financial data should typically be rounded to two decimal places for clarity and accuracy. For fields like ZIP codes that may include leading zeros (e.g., 02134 for Boston), format these as text or use padding functions to retain the zeros.
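One way to sketch this in pandas is shown below. The helper names are hypothetical, and the regex assumes U.S.-style numbers (comma as thousands separator, period as decimal point); unit labels containing "." or "-" would need extra handling.

```python
import pandas as pd

def to_number(values: pd.Series) -> pd.Series:
    """Drop everything except digits, '.', and '-'; unparseable entries become NaN."""
    stripped = values.astype(str).str.replace(r"[^0-9.\-]", "", regex=True)
    return pd.to_numeric(stripped, errors="coerce")

def pad_zip(values: pd.Series) -> pd.Series:
    """Store ZIP codes as 5-character text so leading zeros survive."""
    return values.astype(str).str.zfill(5)

prices = to_number(pd.Series(["$1,234.50", "  99 USD", "12.5 kg"]))
zips = pad_zip(pd.Series([2134, 90210]))
print(list(prices))
print(list(zips))
```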

"Garbage in, garbage out." - V7 Labs

By standardizing numerical and temporal formats, you'll create a solid foundation for the next steps in data preparation.

Normalize Text Fields

Text fields can be a minefield of inconsistencies. Use consistent casing for categorical fields: Title Case for names (e.g., John Smith), lowercase for general text normalization, or UPPERCASE if required by industry standards. Eliminate unnecessary spaces - like leading, trailing, or multiple spaces - using trimming functions or regular expressions such as \s+.

For categorical labels, map all variations of a label to a single, standardized form using tools like lookup tables or dictionaries. Remove unnecessary elements like HTML tags, URLs, and special characters that don't add value. Libraries such as BeautifulSoup can help with this cleanup.
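The whitespace, markup, and label-mapping steps might look like the sketch below. It uses only the standard library; the regex tag stripper is a crude stand-in (a real HTML parser such as BeautifulSoup is safer for messy markup), and `CATEGORY_MAP` is a hypothetical lookup table.

```python
import re

# Hypothetical lookup table mapping label variants to one canonical form
CATEGORY_MAP = {
    "elec": "Electronics",
    "electronic": "Electronics",
    "electronics": "Electronics",
}

TAG_RE = re.compile(r"<[^>]+>")       # crude HTML tag matcher
URL_RE = re.compile(r"https?://\S+")
SPACE_RE = re.compile(r"\s+")

def clean_text(text: str) -> str:
    """Remove tags and URLs, then collapse runs of whitespace."""
    text = TAG_RE.sub(" ", text)
    text = URL_RE.sub(" ", text)
    return SPACE_RE.sub(" ", text).strip()

def canonical_category(label: str) -> str:
    """Map a label to its canonical form; unknown labels fall back to Title Case."""
    key = clean_text(label).lower()
    return CATEGORY_MAP.get(key, key.title())

print(clean_text("  <b>Great</b>   phone!  see https://example.com/x "))
print(canonical_category(" Elec "))
print(canonical_category("home  goods"))
```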

"Text cleaning is signal engineering. You are not deleting data. You are clarifying intent." - Adekola Olawale

Phone numbers should follow the E.164 international standard (e.g., +15551234567) to ensure global consistency. When dealing with emojis, decide on a strategy: remove them entirely, replace them with placeholders, or convert them into text representations like :smiling_face: to preserve sentiment data. The key is to make your process deterministic - meaning the same input will always produce the same output. This ensures your AI experiments are reproducible.
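As a deterministic example, here is a minimal sketch that normalizes phone numbers to E.164 under the assumption that every input is a U.S. number. International data would need a dedicated library, and `to_e164_us` is a hypothetical helper name.

```python
import re
from typing import Optional

def to_e164_us(raw: str) -> Optional[str]:
    """Return +1XXXXXXXXXX for a 10-digit U.S. number (optionally prefixed with 1), else None."""
    digits = re.sub(r"\D", "", raw)          # keep digits only
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]                  # drop the country code
    if len(digits) != 10:
        return None                          # leave for manual review
    return "+1" + digits

print(to_e164_us("(555) 123-4567"))
print(to_e164_us("+1 555.123.4567"))
print(to_e164_us("12345"))
```

Returning None instead of guessing keeps the function deterministic and makes unparseable values easy to route to review.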

Step 3: Validate Data with Rules and Automation

Once your data formats are standardized, the next step is to ensure errors are caught early - before they derail your AI initiatives. Poor data quality costs organizations an average of $12.9 million annually, and it’s estimated that by 2025, about 30% of generative AI projects will be abandoned due to these issues. A structured validation process not only identifies inconsistencies but also enables automated fixes to maintain data integrity.

Apply Validation Rules

Validation rules serve as checkpoints to safeguard your data's accuracy and reliability. These rules can address a variety of issues:

- Presence checks: Ensure mandatory fields, like customer emails or phone numbers, are not left blank. Missing values can distort analytics and lead to biased AI outputs.

- Range constraints: Verify that numerical data stays within logical limits. For instance, ages should fall between 0 and 120, and sensor temperatures might range from -50°F to 150°F.

- Format validation: Use pattern matching to enforce specific formats. U.S. ZIP codes, for example, must be 5 or 9 numeric digits, and email addresses should follow the standard name@domain.com format.

- Uniqueness checks: Prevent duplicate entries by ensuring key fields like Social Security Numbers or transaction IDs are unique within the dataset.

- Cross-field validation: Confirm that related fields align logically. For example, shipping costs should never exceed the total product value, and a start date must always come before an end date.
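The five rule types above can be sketched in plain pandas. The column names and the `validate` helper are assumptions for illustration; every rule runs before anything is reported, so all failures are collected in one pass.

```python
import pandas as pd

def validate(df: pd.DataFrame) -> pd.DataFrame:
    """Run every rule and return one row per failure (row index, rule name)."""
    rules = {
        # Presence check
        "email_present": df["email"].notna() & (df["email"].astype(str).str.strip() != ""),
        # Range constraint
        "age_in_range": df["age"].between(0, 120),
        # Format validation: 5 digits, optionally followed by 4 more
        "zip_format": df["zip"].astype(str).str.fullmatch(r"\d{5}(-?\d{4})?"),
        # Uniqueness check: flag every row that shares an ID
        "id_unique": ~df["transaction_id"].duplicated(keep=False),
        # Cross-field validation
        "start_before_end": pd.to_datetime(df["start"]) < pd.to_datetime(df["end"]),
    }
    failures = [
        {"row": idx, "rule": name}
        for name, passed in rules.items()
        for idx in df.index[~passed.to_numpy(dtype=bool)]
    ]
    return pd.DataFrame(failures, columns=["row", "rule"])

# Hypothetical sample data
df = pd.DataFrame({
    "transaction_id": ["T1", "T2", "T2"],
    "email": ["a@example.com", None, "c@example.com"],
    "age": [34, 150, 28],
    "zip": ["02134", "9021", "90210-1234"],
    "start": ["2025-01-01", "2025-02-01", "2025-03-10"],
    "end": ["2025-01-31", "2025-02-15", "2025-03-01"],
})
errors = validate(df)
print(errors)
```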

"At least 30% of generative AI projects will be abandoned by the end of 2025 because of poor data quality (besides rising costs and inadequate risk controls)." - Rita Sallam, Distinguished VP Analyst, Gartner

Use Automation Tools

For large datasets, manual validation simply isn’t practical. Automation tools streamline the process and make it scalable:

- Pandas (Python): A powerful library for scripting data checks like null value detection, data type validation, and custom rules.

- Pandera: This tool allows you to define validation rules as code, such as range checks or regex patterns, and integrates seamlessly into CI/CD pipelines.

- OpenRefine: Offers a user-friendly, visual interface for cleaning and transforming data without requiring coding skills.

- Enterprise platforms: Tools like Atlan Data Quality Studio work directly within cloud data warehouses like Snowflake and Databricks, efficiently validating billions of rows.

- AI-powered tools: These can generate up to 95% of data quality rules by analyzing historical patterns, drastically cutting down on setup time.

To gain a full picture of your data quality issues, consider implementing lazy validation. This approach collects all errors in one pass, helping you identify and address multiple issues simultaneously.

Step 4: Remove Duplicates and Irrelevant Data

Once you've validated your dataset, it's time to tackle two major sources of noise: duplicate entries and irrelevant data. Studies show that bad data - including duplicates and errors - affects 75% of organizations. In fact, about 30% of duplicates in datasets are exact matches caused by simple mistakes. Building on your earlier efforts in standardization and validation, cleaning up these issues ensures your dataset is ready for solid AI training. This foundation is critical when deploying AI assistants for business growth that rely on high-quality data to drive productivity.

Find and Remove Duplicates

Duplicate records can wreak havoc on your dataset, so removing them requires a strategic approach. Exact duplicates, where entries match perfectly, can be identified with tools like SQL’s ROW_NUMBER() OVER (PARTITION BY ...) window function or the pandas df.duplicated() method. For larger datasets, hashing fields like email and name can help spot duplicates quickly without relying on slower string comparisons.
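A minimal sketch of the hashing idea: normalize the fields first, hash them into one key, and deduplicate on that key. The `record_key` helper and the sample rows are hypothetical.

```python
import hashlib
import pandas as pd

def record_key(email: str, name: str) -> str:
    """SHA-256 of normalized email + name; equal keys mean the normalized fields match."""
    normalized = f"{email.strip().lower()}|{' '.join(name.lower().split())}"
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

df = pd.DataFrame({
    "name": ["John Smith", "john  smith", "Ana Lee"],
    "email": ["JS@example.com", "js@example.com ", "ana@example.com"],
})

exact_dupes = int(df.duplicated().sum())                # rows identical in every column
df["key"] = [record_key(e, n) for e, n in zip(df["email"], df["name"])]
keyed_dupes = int(df.duplicated(subset="key").sum())    # rows sharing a normalized key
deduped = df.drop_duplicates(subset="key", keep="first")

print(exact_dupes, keyed_dupes, len(deduped))
```

The raw comparison finds nothing here, while the normalized key catches the casing and spacing variant.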

Then there’s fuzzy matching, which is essential for catching duplicates with slight variations or typos - think "John Doe" versus "Jon Doe." Techniques like Levenshtein distance, Jaro-Winkler, or vectorization methods (e.g., TF-IDF combined with cosine similarity) are effective here. For efficiency, you might auto-merge matches with over 90% similarity, flag those between 70–89% for manual review, and handle lower scores separately.
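The three-way triage can be sketched with the standard library. Note the swap: this uses `difflib.SequenceMatcher` as a stand-in similarity score, not Levenshtein or Jaro-Winkler, so scores will differ from those metrics. The thresholds mirror the ones suggested above and should be tuned on your own data.

```python
from difflib import SequenceMatcher

def similarity(a: str, b: str) -> float:
    """Similarity in [0, 1] on lowercased, trimmed strings."""
    return SequenceMatcher(None, a.strip().lower(), b.strip().lower()).ratio()

def triage(a: str, b: str, merge_at: float = 0.90, review_at: float = 0.70) -> str:
    """Classify a candidate pair as auto_merge, review, or distinct."""
    score = similarity(a, b)
    if score > merge_at:
        return "auto_merge"
    if score >= review_at:
        return "review"
    return "distinct"

print(triage("John Doe", "Jon Doe"))
print(triage("John Doe", "John Dough"))
print(triage("John Doe", "Alice Wong"))
```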

Before merging duplicates, establish survivorship rules to decide which record to keep as the "Golden Record." Common rules include prioritizing the most complete record, the most recent one (based on timestamps), or data from a trusted source. Normalizing records beforehand can also boost your match accuracy.
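One possible survivorship rule, sketched in pandas: prefer the most complete record and break ties with the most recent timestamp. The `golden_records` helper and the column names are assumptions; a trusted-source rule would add another sort key.

```python
import pandas as pd

def golden_records(df: pd.DataFrame, key: str, timestamp: str) -> pd.DataFrame:
    """Keep one row per key: most non-null fields first, newest timestamp as tie-breaker."""
    ranked = df.assign(_filled=df.notna().sum(axis=1))
    ranked = ranked.sort_values(["_filled", timestamp], ascending=[False, False])
    survivors = ranked.drop_duplicates(subset=key, keep="first")
    return survivors.drop(columns="_filled").sort_index()

# Hypothetical sample data
df = pd.DataFrame({
    "customer_id": [1, 1, 2, 2],
    "phone": [None, "+15551234567", "+15550000001", "+15550000002"],
    "updated_at": pd.to_datetime(["2026-01-10", "2025-06-01", "2025-01-01", "2025-09-01"]),
})
golden = golden_records(df, key="customer_id", timestamp="updated_at")
print(golden)
```

For customer 1 the older but complete row wins over the newer incomplete one; for customer 2 both rows are complete, so the newer one wins.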

"The cheapest duplicate to fix is the one that never gets created." - William Flaiz, Digital Transformation Executive

Preventing duplicates at the data entry stage can reduce their occurrence by 40–60% before you even run detection tools. Incorporate duplicate checks into your data pipelines using code assertions like assert df.duplicated().sum() == 0 to enforce uniqueness during ingestion. Once duplicates are handled, your focus can shift to filtering out irrelevant records.

Filter Out Irrelevant Records

Irrelevant data, such as outdated entries, logical inconsistencies, or impossible values, needs to be removed or archived. Examples include expired product listings, orders marked as both "shipped" and "pending delivery", or absurd values like a 323-year-old employee or negative inventory numbers.

Every record should align with your project’s goals. For instance, in a model predicting athlete performance, metadata like photo URLs or profile links likely won't contribute to accuracy and can be dropped. Use time-threshold audits to eliminate outdated records. When dealing with statistical outliers - data points with Z-scores above 3 or below -3 - consult domain experts to determine if they represent rare but valid events or errors.

Archive irrelevant data instead of outright deleting it. This preserves historical information for potential audits or future analysis. Always keep a backup of your raw dataset before making sweeping changes, so you can recover any data removed by mistake. After cleaning, sample your dataset to evaluate its quality and ensure no critical data was lost.
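A sketch of the archive-instead-of-delete approach: rows that fail a time threshold or a range check are moved to a separate frame with a reason, so nothing is lost. The function name, columns, and cutoff are illustrative assumptions.

```python
import pandas as pd

def split_relevant(df: pd.DataFrame, date_col: str, cutoff: str, age_col: str):
    """Return (kept, archived); archived rows carry the reason they were set aside."""
    outdated = pd.to_datetime(df[date_col]) < pd.Timestamp(cutoff)
    invalid = ~df[age_col].between(0, 120)   # missing ages also count as invalid here
    drop = outdated | invalid
    reasons = outdated[drop].map({True: "outdated", False: "invalid_value"})
    return df[~drop], df[drop].assign(reason=reasons)

# Hypothetical sample data
df = pd.DataFrame({
    "last_updated": ["2025-06-01", "2019-01-01", "2025-07-01"],
    "employee_age": [34, 40, 323],
})
kept, archived = split_relevant(df, "last_updated", "2023-01-01", "employee_age")
print(kept)
print(archived)
```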

Here’s a quick checklist to help you identify and manage irrelevant data:

| Irrelevance Category | Identification Method | Recommended Action |
|---|---|---|
| Outdated Data | Time-threshold audits | Archive or Delete |
| Invalid Values | Range checks (e.g., Age 0–120) | Remove Record |
| Logical Conflicts | Cross-field validation rules | Flag for Review or Delete |
| Redundant Info | Correlation/Duplicate analysis | Drop Column/Merge |
| Non-Utility Data | Alignment with project KPIs | Drop Column |

"The quality of the data is paramount to the performance of AI models. Models trained on noisy data risk making decisions that are not just wrong but potentially harmful." - Dr. Tom Mitchell, Professor of Machine Learning, Carnegie Mellon University

Step 5: Document Changes and Track Versions

Now that duplicates and irrelevant records are out of the way, it's time to focus on documenting every change to ensure modifications are traceable and reversible. This step is not just about keeping things organized - it’s also crucial for avoiding data loss and meeting regulatory requirements. Industries like healthcare and finance, for instance, must comply with standards such as HIPAA and FDA regulations, where maintaining an audit trail is non-negotiable.

Create a Data Cleaning Log

Keeping a detailed log of your data cleaning activities is essential for maintaining high-quality AI training data. A good data cleaning log should capture the who, what, when, and why of every change. For example:

"Removed 12 records with Age > 120 on 03/15/2026 by J. Smith, approved by M. Chen - v2.1.0."

Here’s what to include in your log:

- The specific component that was changed (e.g., file, formula, or query)

- A clear description of the modification

- The date the change was made

- The person responsible for the change

- The person who approved it

- The version number

- The reason behind the change

To make your logs even more user-friendly, categorize changes. Common categories might include:

- Added: New features or data points introduced

- Changed: Adjustments to existing data

- Deprecated: Features marked for future removal

- Removed: Data or features that were deleted

- Fixed: Corrections for bugs or errors

- Security: Updates to address vulnerabilities

Keep the log human-readable and organized chronologically, with the latest entries at the top. Assign each dataset version a unique entry and focus on documenting changes that impact functionality, fix errors, or improve security. Tools like Google Sheets' "Show edit history" or SQL query logs can make this process easier by automating some aspects of documentation.
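If you prefer to keep the log as structured data, a minimal sketch could look like this. The `CleaningLogEntry` class, its field names, and the reason text are hypothetical; the first entry reuses the example above.

```python
from dataclasses import dataclass, asdict
import json

CATEGORIES = {"Added", "Changed", "Deprecated", "Removed", "Fixed", "Security"}

@dataclass(frozen=True)
class CleaningLogEntry:
    component: str
    category: str
    description: str
    date: str
    changed_by: str
    approved_by: str
    version: str
    reason: str

def prepend(log: list, entry: CleaningLogEntry) -> list:
    """Return a new log with the entry at the top (newest first)."""
    if entry.category not in CATEGORIES:
        raise ValueError(f"unknown category: {entry.category}")
    return [asdict(entry)] + log

log = prepend([], CleaningLogEntry(
    component="customers.csv", category="Removed",
    description="Removed 12 records with Age > 120",
    date="03/15/2026", changed_by="J. Smith", approved_by="M. Chen",
    version="2.1.0", reason="Age outside the 0-120 validation range",
))
log = prepend(log, CleaningLogEntry(
    component="customers.csv", category="Fixed",
    description="Merged duplicate customer rows",
    date="03/23/2026", changed_by="J. Smith", approved_by="M. Chen",
    version="2.1.1", reason="Duplicate entries found during audit",
))
print(json.dumps(log, indent=2))
```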

Maintain Dataset Versions

Detailed logs are just the beginning - versioning your dataset takes things a step further by creating a reliable history of all modifications. This ensures reproducibility and eliminates guesswork. Use versioned snapshots of your datasets, following a structured numbering system like Semantic Versioning. Here’s how it works:

- Major versions (e.g., v2.0.0): Indicate structural changes.

- Minor versions (e.g., v2.1.0): Reflect data additions.

- Patches (e.g., v2.1.1): Cover corrections or deduplication.

For clarity, adopt standardized naming conventions such as "CustomerData_v2.1.0_03232026", which include the dataset name, version number, and date.
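The numbering scheme and naming convention can be sketched as two small helpers. The function names and the change labels ("structural", "addition", "correction") are assumptions chosen to match the three levels above.

```python
from datetime import date

def bump(version: str, change: str) -> str:
    """Return the next version for a structural change, data addition, or correction."""
    major, minor, patch = (int(part) for part in version.split("."))
    if change == "structural":
        return f"{major + 1}.0.0"
    if change == "addition":
        return f"{major}.{minor + 1}.0"
    if change == "correction":
        return f"{major}.{minor}.{patch + 1}"
    raise ValueError(f"unknown change type: {change}")

def snapshot_name(dataset: str, version: str, on: date) -> str:
    """Build a name like CustomerData_v2.1.0_03232026 (MMDDYYYY date)."""
    return f"{dataset}_v{version}_{on:%m%d%Y}"

print(snapshot_name("CustomerData", "2.1.0", date(2026, 3, 23)))
print(bump("2.1.0", "correction"))
```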

"Taking data versioning as seriously as model versioning has since saved us months of rework." - Mircea Dima, CTO of AlgoCademy

"Debugging that used to take weeks now takes hours because there's zero ambiguity about dataset versions." - Eren Hukumdar, Co-Founder of Entrapeer

To further streamline processes, link specific dataset versions to corresponding code commits and model artifacts. This approach eliminates confusion during debugging and aligns with the EU AI Act, which mandates auditable training data records for high-risk AI systems by August 2026.
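One lightweight way to create that link is a manifest that records a content hash of the dataset next to the code commit and model artifact. This is a sketch under assumptions: the manifest fields, commit ID, and file names are hypothetical, and dedicated data-versioning tools offer more than this.

```python
import hashlib
import json

def dataset_fingerprint(data: bytes) -> str:
    """SHA-256 of the dataset bytes; any change to the data changes the fingerprint."""
    return hashlib.sha256(data).hexdigest()

def build_manifest(dataset_version: str, data: bytes, code_commit: str, model_artifact: str) -> dict:
    """Tie one dataset snapshot to the code and model that used it."""
    return {
        "dataset_version": dataset_version,
        "sha256": dataset_fingerprint(data),
        "code_commit": code_commit,
        "model_artifact": model_artifact,
    }

csv_bytes = b"id,age\n1,34\n2,29\n"   # stand-in for the real file contents
manifest = build_manifest("2.1.0", csv_bytes, "abc1234", "model-2.1.0.bin")
print(json.dumps(manifest, indent=2))
```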

Finally, implement "Time to Live" (TTL) policies to automatically clean up outdated dataset versions and prevent storage overload. Always run data cleaning projects separately on training and test datasets to avoid information leakage and ensure unbiased model evaluation.

Conclusion

Key Takeaways

Clean data forms the backbone of any successful AI project. By following the five steps in this checklist, you can transform unreliable datasets into high-quality training material. Start by auditing your dataset to identify issues like missing values, duplicates, and outliers. Then, standardize formats for dates, numbers, and text fields to ensure consistency. Use validation rules and automation to confirm accuracy, eliminate duplicates and irrelevant entries to avoid skewed results, and document every change with detailed logs and version control to maintain transparency.

The impact of clean data is undeniable. For example, correcting just 6.5% of dataset labels can improve model accuracy by 13%. Tools like Cleanlab can reduce error rates by as much as 37% by fixing label errors. Poor data quality is costly - it’s estimated to cause $15 million in annual losses for the average business. Furthermore, up to 85% of AI projects fall short of their goals, often due to bad data. These steps are essential for laying a solid foundation for robust AI performance.

Why Clean Data Matters for AI Solutions

Clean data is critical for ensuring that AI tools like Chat Whisperer function effectively and maintain user trust. Even the most advanced algorithms can’t compensate for poor-quality data. As Hmrishav Bandyopadhyay aptly states:

"Garbage in, garbage out."

Even small amounts of noise in the data can disrupt performance.

For businesses leveraging AI-powered tools like Chat Whisperer, clean data ensures that chatbots deliver accurate, context-aware responses without being bogged down by irrelevant or incorrect information. When your training data is properly standardized and validated, your AI assistant can focus on delivering seamless customer service, personalized interactions, and actionable insights. Investing in data cleaning leads to better accuracy, fewer manual corrections, and, most importantly, increased customer trust in your AI solutions.

Dr. Tom Mitchell, a Professor of Machine Learning at Carnegie Mellon University, emphasizes this point:

"The quality of the data is paramount to the performance of AI models. Models trained on noisy data risk making decisions that are not just wrong but potentially harmful."

Clean data doesn’t just improve metrics - it’s the key to building AI systems that perform reliably in real-world applications.

FAQs

How do I choose between imputing missing values and dropping rows?

Deciding whether to fill in missing values or remove rows entirely depends on the specifics of your dataset and its overall quality.

Imputation, such as using the mean, median, or mode, is a smart choice when only a small portion of the data is missing and you can reasonably estimate the missing values without skewing the results. On the other hand, dropping rows is the better route when a significant amount of data is missing or if the row contains critical errors that could compromise the analysis.

The goal is to strike a balance between keeping as much data as possible and maintaining its quality to ensure your AI model performs accurately.
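As a rough sketch of that decision, the helper below imputes the median when the missing share is small and drops rows otherwise. The 5% threshold and the `handle_missing` name are assumptions for illustration, not a recommended cutoff.

```python
import pandas as pd

def handle_missing(df: pd.DataFrame, column: str, max_missing_share: float = 0.05):
    """Impute the median if few values are missing; otherwise drop the affected rows."""
    share = df[column].isna().mean()
    if share <= max_missing_share:
        filled = df[column].fillna(df[column].median())
        return df.assign(**{column: filled}), "imputed"
    return df.dropna(subset=[column]), "dropped"

# Hypothetical data: 1 of 40 values missing, then 3 of 10
mostly_complete = pd.DataFrame({"income": [50.0] * 39 + [None]})
sparse = pd.DataFrame({"income": [50.0] * 7 + [None] * 3})

result_a, action_a = handle_missing(mostly_complete, "income")
result_b, action_b = handle_missing(sparse, "income")
print(action_a, len(result_a))
print(action_b, len(result_b))
```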

What’s the safest way to remove outliers without losing rare but valid cases?

To carefully remove outliers while keeping rare but legitimate cases intact, start by visualizing your data. Tools like scatter plots, violin plots, or box plots can help pinpoint potential outliers. Next, use statistical methods such as Z-scores (values beyond ±3 standard deviations) or interquartile range (IQR) analysis to assess the data more precisely. By combining these approaches, you can separate genuine outliers from valid rare instances, allowing for selective removal or adjustment without accidentally discarding valuable information.
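A minimal sketch of the flag-first approach: compute both Z-score and IQR flags and review the flagged rows before removing anything. The `flag_outliers` helper and the sample values are hypothetical; with very small samples a single extreme value inflates the standard deviation enough that the Z-score check can miss it.

```python
import pandas as pd

def flag_outliers(values: pd.Series, z_limit: float = 3.0, iqr_factor: float = 1.5) -> pd.DataFrame:
    """Flag values by Z-score and by IQR fences without removing them."""
    z = (values - values.mean()) / values.std()
    q1, q3 = values.quantile(0.25), values.quantile(0.75)
    iqr = q3 - q1
    low, high = q1 - iqr_factor * iqr, q3 + iqr_factor * iqr
    return pd.DataFrame({
        "value": values,
        "z_flag": z.abs() > z_limit,
        "iqr_flag": (values < low) | (values > high),
    })

# Hypothetical lunch expenses with one suspicious entry
expenses = pd.Series([12, 15, 14, 13, 16] * 4 + [50000])
flags = flag_outliers(expenses)
print(flags[flags["z_flag"] | flags["iqr_flag"]])
```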

How can I prevent data leakage when cleaning training vs. test data?

To safeguard against data leakage, it's crucial to handle training and test datasets separately throughout your workflow. Here's how you can ensure this separation:

- Start with the test dataset: Review and manually verify any issues within the test data. Avoid using automated tools for corrections at this stage, as this could inadvertently introduce biases.

- Clean the training data independently: Perform all preprocessing and cleaning steps on the training dataset without referencing or incorporating information from the test set.

Maintaining this strict separation helps preserve the integrity of your data and prevents contamination, ensuring your model's performance remains unbiased and reliable.
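One common way to apply this in code, sketched here as an assumption rather than the only option, is to learn cleaning parameters from the training set alone and then reuse them unchanged on the test set. The helper names are hypothetical.

```python
import pandas as pd

def fit_cleaner(train: pd.DataFrame, column: str) -> dict:
    """Learn cleaning parameters from the training data only."""
    return {"median": train[column].median()}

def apply_cleaner(df: pd.DataFrame, column: str, params: dict) -> pd.DataFrame:
    """Fill missing values using the training-derived median."""
    return df.assign(**{column: df[column].fillna(params["median"])})

# Hypothetical split
train = pd.DataFrame({"x": [10.0, 20.0, 30.0, None]})
test = pd.DataFrame({"x": [1000.0, None]})

params = fit_cleaner(train, "x")               # the test set is never consulted
train_clean = apply_cleaner(train, "x", params)
test_clean = apply_cleaner(test, "x", params)
print(test_clean)
```

The missing test value is filled with the training median, so no statistic computed from test data flows into the cleaning step.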