AI in Software Deployment: Error Detection

AI is transforming software deployment by identifying and fixing errors faster than traditional methods. Here's what you need to know:

- Why it Matters: Deployment pipelines are increasingly complex, making manual error detection inefficient and prone to delays. AI tools analyze logs, detect anomalies, and predict issues before they escalate.

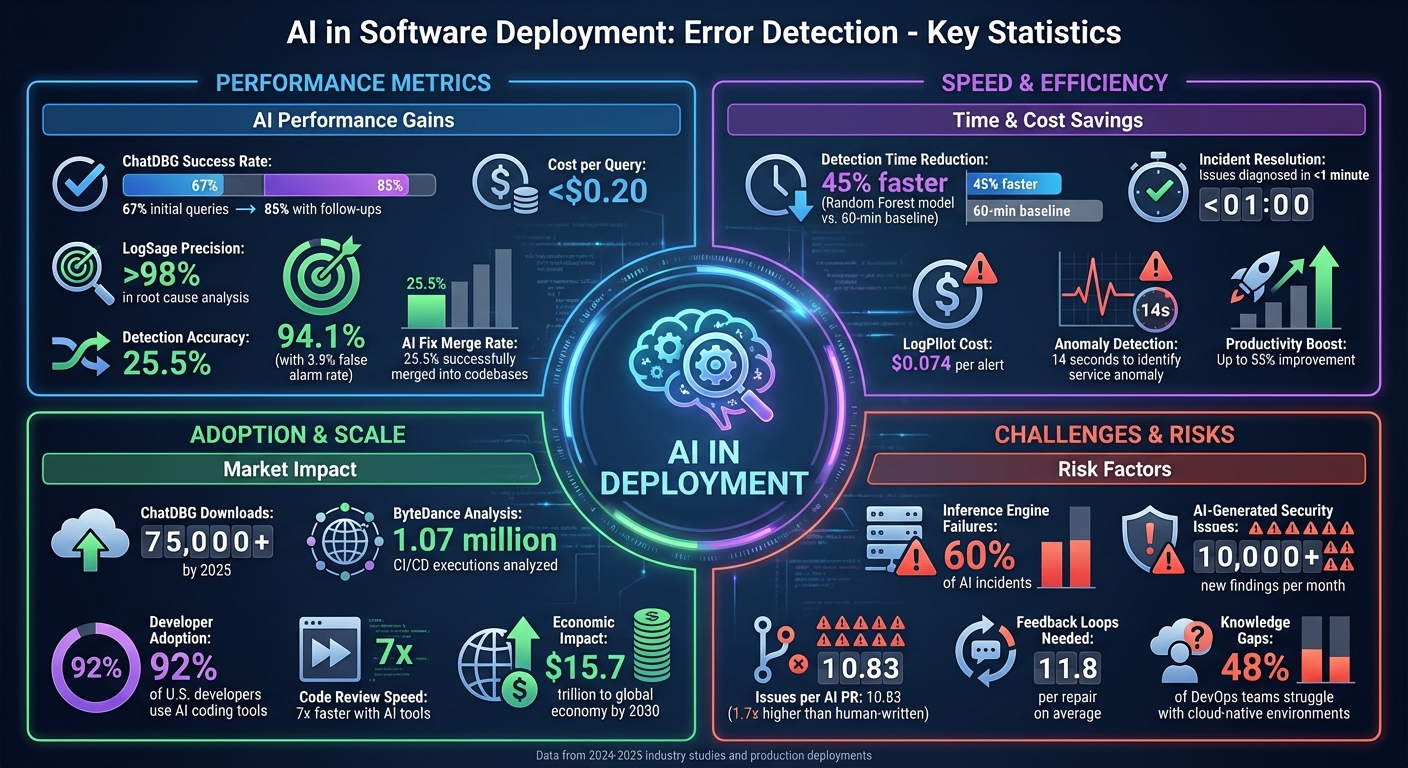

- Key Tools: Systems like LogSage and ChatDBG use machine learning and large language models (LLMs) to streamline error detection. For example, LogSage achieved over 98% precision in identifying root causes, significantly outperforming earlier methods.

- Performance Gains: AI-assisted debugging has shown up to an 85% success rate with follow-up queries, reducing costs to less than $0.20 per query. Automated fixes are becoming more reliable, with 25.5% of AI-generated fixes successfully merged into codebases.

- Challenges: AI introduces risks like false alarms (3.9%), inference failures, and security vulnerabilities in generated code. Governance frameworks and staged autonomy are essential to mitigate these risks.

- Business Impact: AI tools save time and reduce costs, with companies reporting productivity boosts of up to 55%. However, success depends on strong data infrastructure and executive support.

AI is reshaping error detection, but its success hinges on preparation, governance, and the ability to integrate these tools effectively into deployment workflows.

AI Error Detection Performance Metrics and Business Impact in Software Deployment

Research Findings on AI in Deployment Error Detection

Adoption Trends and Metrics

In 2025, a study of 5,000 tech professionals revealed a striking pattern: AI tends to amplify the existing dynamics of software development teams. High-performing teams see their strengths magnified, while struggling teams see their problems intensified. Derek DeBellis from Google's DORA research team summed it up:

"AI's primary role in software development is that of an amplifier. It magnifies the strengths of high-performing organizations and the dysfunctions of struggling ones."

The use of AI-powered debugging tools has seen a sharp rise. For example, ChatDBG, a debugging assistant powered by AI, surpassed 75,000 downloads by 2025. These tools now incorporate "autonomous reasoning", allowing the AI to independently operate debuggers, execute commands, and analyze program states without constant human input. This shift highlights a significant change in the role of engineers - from manually writing and debugging code to managing AI systems that handle routine debugging tasks. Such trends hint at measurable gains in error detection efficiency.

Performance Improvements in Error Detection

The impact of AI on debugging is notable. ChatDBG, for instance, achieved a 67% success rate on initial queries, which rose to 85% with follow-up queries. AI-assisted debugging also comes at a low cost, averaging less than $0.20 per query.

A three-month production study from April to June 2025 showcased further advancements. A 70B-parameter Llama-based model demonstrated a 42.3% solve rate in fixing source code errors based on test failures. Engineers reviewed 80% of the AI-generated fixes, and 25.5% of all generated fixes were merged into the codebase. These results underscore the potential of AI to transform error detection, though challenges remain.

Study Limitations

Despite impressive metrics, there are notable limitations. Many studies focus on short three-month periods to maintain data relevance, potentially missing longer-term trends in system architecture. Additionally, by concentrating on high-severity issues (Severity 2 and 2.5), these studies might overlook less critical but still valuable insights into overall system health.

AI-generated fixes often require refinement. On average, 11.8 feedback loops are needed per repair, with many fixes being only partially accurate and requiring further adjustments. Knowledge gaps also pose challenges - nearly 48% of DevOps teams report difficulties working within cloud-native environments. Even advanced AI systems are not perfect, with a 3.9% false alarm rate, despite achieving 94.1% detection accuracy. These limitations highlight areas where further improvements are needed to fully realize the potential of AI in deployment error detection.
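Figures such as "false alarm rate" and "detection accuracy" are easy to conflate, so it can help to see how each is derived from the same confusion matrix. The sketch below is illustrative only: the counts are hypothetical and are not taken from the studies cited above, and it assumes the common definitions (false alarm rate as false positives over all healthy cases, accuracy as correct decisions over all cases), which individual papers may define differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Outcome counts for a detector evaluated on labeled deployments."""
    true_pos: int   # faulty deployments correctly flagged
    false_pos: int  # healthy deployments wrongly flagged (false alarms)
    true_neg: int   # healthy deployments correctly passed
    false_neg: int  # faulty deployments that were missed


def _ratio(numerator: int, denominator: int) -> float:
    # Return 0.0 instead of raising when a category is empty.
    return numerator / denominator if denominator else 0.0


def false_alarm_rate(c: ConfusionCounts) -> float:
    return _ratio(c.false_pos, c.false_pos + c.true_neg)


def accuracy(c: ConfusionCounts) -> float:
    total = c.true_pos + c.false_pos + c.true_neg + c.false_neg
    return _ratio(c.true_pos + c.true_neg, total)


def precision(c: ConfusionCounts) -> float:
    return _ratio(c.true_pos, c.true_pos + c.false_pos)


def recall(c: ConfusionCounts) -> float:
    return _ratio(c.true_pos, c.true_pos + c.false_neg)


# Hypothetical evaluation set: 100 faulty and 100 healthy deployments.
counts = ConfusionCounts(true_pos=90, false_pos=4, true_neg=96, false_neg=10)
print(f"false alarm rate: {false_alarm_rate(counts):.3f}")
print(f"accuracy:         {accuracy(counts):.3f}")
print(f"precision:        {precision(counts):.3f}")
print(f"recall:           {recall(counts):.3f}")
```

A detector can score well on accuracy while still producing enough false alarms to erode trust, which is why the two numbers are usually reported together.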

AI Techniques for Deployment Error Detection

Supervised and Unsupervised Learning

AI has taken error detection to a new level by replacing manual rules with machine learning models. Unsupervised methods are often the starting point, analyzing data using statistical thresholds and log deduplication to flag anomalies.
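As a rough illustration of the two unsupervised building blocks mentioned above, the following sketch collapses log lines into templates (deduplication) and flags a metric that strays too far from its recent history (a statistical threshold). The masking rule, the z-score cutoff of 3.0, and all function names are assumptions chosen for the example, not a description of any particular product.

```python
import re
import statistics
from collections import Counter

# Mask hex IDs and numbers so lines that differ only in variable parts collapse.
_VARIABLE = re.compile(r"0x[0-9a-fA-F]+|\d+")


def to_template(line: str) -> str:
    return _VARIABLE.sub("<*>", line.strip())


def deduplicate(lines: list[str]) -> Counter:
    """Count how often each log template occurs."""
    return Counter(to_template(line) for line in lines)


def is_anomalous(history: list[float], current: float, z_threshold: float = 3.0) -> bool:
    """Flag `current` if it lies more than `z_threshold` standard deviations from the mean."""
    mean = statistics.fmean(history)
    stdev = statistics.pstdev(history)
    if stdev == 0:
        return current != mean
    return abs(current - mean) / stdev > z_threshold


logs = ["retry 1 of 5", "retry 2 of 5", "disk full on node 12"]
print(deduplicate(logs))

errors_per_minute = [10, 12, 11, 9, 10]
print(is_anomalous(errors_per_minute, 40))
print(is_anomalous(errors_per_minute, 11))
```

Deduplicating first keeps the anomaly check from being dominated by thousands of near-identical lines.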

On the other hand, supervised learning takes things further by learning from historical data. For example, in 2025, Datadog's engineering team swapped out traditional statistical checks for a Random Forest model. This model predicts deployment failures based on data collected 10–20 minutes after rollout, cutting detection time by 45% compared to the previous 60-minute baseline. To address the challenge of limited labeled data, weak supervision combines signals like version rollbacks, error signatures, and rule-based checks to create training labels.
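Weak supervision can be sketched as a set of small labeling functions that each vote on a deployment, with the majority vote becoming the training label. The example below is a minimal illustration under assumed field names and thresholds. It is not Datadog's implementation, and the resulting labels would still need to be fed to a classifier such as a Random Forest in a separate step.

```python
from dataclasses import dataclass
from typing import Optional

FAILURE, HEALTHY, ABSTAIN = 1, 0, -1


@dataclass(frozen=True)
class Deployment:
    """Signals observed after a rollout (hypothetical field names)."""
    rolled_back: bool
    new_error_signatures: int
    failed_rule_checks: int


def lf_rollback(d: Deployment) -> int:
    # A rollback is strong evidence of failure; its absence says little.
    return FAILURE if d.rolled_back else ABSTAIN


def lf_error_signatures(d: Deployment) -> int:
    if d.new_error_signatures >= 3:  # assumed threshold
        return FAILURE
    return HEALTHY if d.new_error_signatures == 0 else ABSTAIN


def lf_rule_checks(d: Deployment) -> int:
    return FAILURE if d.failed_rule_checks > 0 else HEALTHY


LABELING_FUNCTIONS = (lf_rollback, lf_error_signatures, lf_rule_checks)


def weak_label(d: Deployment) -> Optional[int]:
    """Majority vote over non-abstaining functions; None when there is no clear signal."""
    votes = [vote for vote in (lf(d) for lf in LABELING_FUNCTIONS) if vote != ABSTAIN]
    failures = votes.count(FAILURE)
    healthy = len(votes) - failures
    if failures == healthy:
        return None
    return FAILURE if failures > healthy else HEALTHY


print(weak_label(Deployment(rolled_back=True, new_error_signatures=5, failed_rule_checks=1)))
print(weak_label(Deployment(rolled_back=False, new_error_signatures=0, failed_rule_checks=0)))
```

Returning None on ties lets ambiguous deployments be dropped from the training set rather than labeled by guesswork.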

LLM-Based Analysis

Large language models (LLMs) are proving invaluable for interpreting the semi-structured and messy logs that traditional methods struggle to process. Between 2024 and 2025, ByteDance introduced LogSage, an LLM-powered framework that analyzed over 1.07 million executions across its CI/CD pipelines. LogSage used a two-stage process: first, it filtered noise by comparing failed logs to successful templates, and then it employed Retrieval-Augmented Generation (RAG) to query internal knowledge bases for potential fixes. In production, this approach achieved over 80% precision.

On a separate benchmark of 367 GitHub CI/CD failures, LogSage delivered over 98% precision in root cause analysis, offering a 38% F1 score improvement compared to standard LLM prompts. Similarly, Volcano Engine Cloud introduced LogPilot in September 2025. This system improved root cause localization accuracy by 54.79%, diagnosing issues in less than a minute at a cost of $0.074 per alert.
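The two-stage idea described for LogSage can be approximated in a few lines: remove lines from a failed log that also appear, as templates, in successful runs, then look up the remaining lines in a knowledge base. This is a toy sketch and not LogSage's actual code. The keyword-overlap lookup stands in for the retrieval step of RAG (real systems typically use embeddings and pass the retrieved text to an LLM), and the knowledge base entries are invented for illustration.

```python
import re

_NUMBER = re.compile(r"\d+")
_WORD = re.compile(r"[a-z_]+")


def template(line: str) -> str:
    """Normalize a log line so run-specific numbers do not matter."""
    return _NUMBER.sub("<*>", line.strip().lower())


def filter_noise(failed_log: list[str], successful_logs: list[list[str]]) -> list[str]:
    """Stage 1: keep only lines whose template never occurs in a successful run."""
    known = {template(line) for log in successful_logs for line in log}
    return [line for line in failed_log if template(line) not in known]


def _tokens(text: str) -> set[str]:
    return set(_WORD.findall(text.lower()))


def retrieve(query_lines: list[str], knowledge_base: dict[str, str], top_k: int = 1) -> list[str]:
    """Stage 2 (simplified): rank known symptoms by word overlap with the suspicious lines."""
    query = _tokens(" ".join(query_lines))
    scored = [(len(query & _tokens(symptom)), fix) for symptom, fix in knowledge_base.items()]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
    return [fix for _, fix in ranked[:top_k]]


successful = [["Step 1 checkout ok", "Step 2 build ok", "Step 3 tests passed in 42s"]]
failed = [
    "Step 1 checkout ok",
    "Step 2 build ok",
    "ModuleNotFoundError: No module named requests",
    "Step 3 tests failed in 3s",
]
# Hypothetical knowledge base mapping symptoms to suggested fixes.
kb = {
    "no module named import dependency missing": "Add the missing package to the dependency manifest and rebuild",
    "no space left on device disk full": "Prune the build cache on the runner",
}

suspicious = filter_noise(failed, successful)
print(suspicious)
print(retrieve(suspicious, kb))
```

Filtering first matters because it shrinks the text that has to be searched or sent to a model, which reduces both cost and the chance of matching on irrelevant lines.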

AI Agents in CI/CD Pipelines

AI is no longer limited to detecting errors - it’s now actively influencing deployment decisions. AI agents, often described as "policy-bounded co-pilots", assess risks in real time and decide whether to promote a build, throttle a rollout, or initiate an automated rollback.
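A "policy-bounded" decision can be pictured as a function that maps live rollout signals to one of the three actions, with the limits supplied by a policy object rather than chosen by the agent. The sketch below is a simplified illustration; the metric names and threshold values are assumptions, and a real agent would weigh many more signals.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    PROMOTE = "promote"
    THROTTLE = "throttle"
    ROLLBACK = "rollback"


@dataclass(frozen=True)
class RolloutPolicy:
    """Limits the agent must respect (hypothetical values)."""
    promote_error_rate: float = 0.01   # at or below this, the build may be promoted
    rollback_error_rate: float = 0.05  # above this, roll back immediately
    max_p99_latency_ms: float = 800.0


def decide(error_rate: float, p99_latency_ms: float, policy: RolloutPolicy = RolloutPolicy()) -> Action:
    if error_rate > policy.rollback_error_rate:
        return Action.ROLLBACK
    if error_rate > policy.promote_error_rate or p99_latency_ms > policy.max_p99_latency_ms:
        return Action.THROTTLE
    return Action.PROMOTE


print(decide(error_rate=0.002, p99_latency_ms=300))
print(decide(error_rate=0.03, p99_latency_ms=300))
print(decide(error_rate=0.20, p99_latency_ms=300))
```

Keeping the thresholds in a separate policy object means they can be reviewed and versioned independently of the agent that applies them.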

Microsoft researchers, for example, tested an LLM agent powered by GPT-4 on the SocialNetwork microservices application in 2025. The agent identified a service anomaly in just 14 seconds and resolved a virtualization-layer misconfiguration in 36 seconds using 10–12 interactions, costing roughly $0.25 per incident. This was made possible by the Agent-Cloud-Interface (ACI) architecture, which enables AI agents to interact directly with cloud environments through documented APIs. Commands like get_logs and exec_shell allow these agents to investigate and fix issues autonomously.
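To make the idea of an agent-facing interface more concrete, here is a small sketch of a tool layer that exposes get_logs and exec_shell to an agent while restricting what the shell tool may run. Only the two command names come from the text above; the class, its parameters, and the allowlist mechanism are hypothetical and do not reproduce the actual ACI. The shell is injected as a plain function so the example runs without touching a real system.

```python
from typing import Callable


class ToolError(Exception):
    """Raised when the agent requests an unknown or disallowed operation."""


class AgentInterface:
    def __init__(
        self,
        log_source: dict[str, list[str]],
        shell: Callable[[str], str],
        allowed_prefixes: tuple[str, ...],
    ) -> None:
        self._logs = log_source
        self._shell = shell
        self._allowed = allowed_prefixes

    def get_logs(self, service: str, tail: int = 20) -> list[str]:
        if service not in self._logs:
            raise ToolError(f"unknown service: {service}")
        if tail <= 0:
            raise ToolError("tail must be positive")
        return self._logs[service][-tail:]

    def exec_shell(self, command: str) -> str:
        # Only commands that start with an approved prefix are passed through.
        if not command.startswith(self._allowed):
            raise ToolError(f"command not permitted: {command}")
        return self._shell(command)

    def call(self, tool: str, **kwargs):
        """Single entry point the agent uses, so every call can be checked and logged."""
        handlers = {"get_logs": self.get_logs, "exec_shell": self.exec_shell}
        if tool not in handlers:
            raise ToolError(f"unknown tool: {tool}")
        return handlers[tool](**kwargs)


aci = AgentInterface(
    log_source={"example-service": ["ok", "ok", "connection refused"]},
    shell=lambda command: f"ran: {command}",  # stand-in for a real executor
    allowed_prefixes=("kubectl get ", "kubectl describe "),
)
print(aci.call("get_logs", service="example-service", tail=1))
print(aci.call("exec_shell", command="kubectl get pods"))
```

Routing everything through one call method gives a natural place to add audit logging or rate limits later.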

These agents operate much like human Site Reliability Engineers. Specialized agents, with roles such as "Anomaly Sentinel" or "Root Detective", collaborate under an orchestrator, cross-reviewing diagnostic evidence iteratively. This multi-agent approach is essential for managing modern deployments, where nearly 70% of software incidents arise from deployment-related issues. Together, these advancements are transforming error detection and response, paving the way for even greater efficiency in deployment processes.

Risks and Challenges of AI in Deployment

New Failure Modes Introduced by AI

AI improves error detection, but it also introduces failure modes of its own. A study examining 156 high-severity production incidents in AI inference services revealed that 60% of these issues stemmed from inference engine failures, with timeouts accounting for 40% of those failures. Task difficulty matters as well: simpler tasks reach a success rate of 87.5%, while debugging tasks handled by large language models (LLMs) show error rates exceeding 50%.

The root of the problem lies in using probabilistic tools for tasks that demand precision. When AI systems perform multi-step operations - like analyzing logs, generating configurations, and making deployment decisions - a single misstep can snowball into a cascade of failures. In multi-step reasoning tasks, output divergence rates can reach 20% to 30%, meaning the same input could yield different results nearly a third of the time. Even more troubling, AI-generated code can look flawless on the surface but fail catastrophically in production. For instance, Terraform configurations may include convincing but non-existent function names, breaking deployments entirely.
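One simple way to quantify output divergence is to run the same input several times and measure how often the result differs from the most common answer. The sketch below uses that definition, which is an assumption since the text does not specify how divergence is measured, and the sample outputs are invented.

```python
from collections import Counter


def divergence_rate(outputs: list[str]) -> float:
    """Fraction of runs whose output differs from the most frequent output."""
    if not outputs:
        raise ValueError("at least one output is required")
    _, modal_count = Counter(outputs).most_common(1)[0]
    return 1 - modal_count / len(outputs)


# Ten hypothetical runs of the same prompt: seven agree, three do not.
runs = ["rollback"] * 7 + ["promote"] * 2 + ["throttle"]
print(f"{divergence_rate(runs):.0%}")
```

Tracking this number per prompt makes it possible to spot the steps in a multi-step workflow where nondeterminism is most likely to cascade.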

"The hidden risk isn't just in what the system can't do - it's in what it thinks it can do, but gets wrong." - Zichuan Xiong, Thoughtworks

These operational uncertainties inevitably create ripple effects, particularly in the realms of security and privacy.

Security and Privacy Considerations

AI’s rapid integration into deployment pipelines introduces significant security and privacy challenges. As the reliance on AI grows, so does the risk. For example, in the six months leading up to June 2025, security findings attributed to AI-generated code rose tenfold, to over 10,000 new findings per month across analyzed repositories. On average, AI-generated pull requests contain 10.83 issues, about 1.7 times as many as pull requests written by humans. Alarmingly, nearly half of the code snippets generated by five leading LLMs were found to have exploitable bugs.

But it’s not just buggy code that poses a threat. Fine-tuned AI models used for error detection can unintentionally expose sensitive deployment data if their training datasets aren’t properly secured. Another growing concern is "shadow AI", where non-technical teams use AI tools to create scripts without any security oversight, bypassing established review processes entirely. Adding to the complexity, AI tools often generate fewer but much larger pull requests that span dozens of files, making manual reviews nearly impossible.

"AI tools are not designed to exercise judgment. They do not think about privilege escalation paths, secure architectural patterns, or compliance nuances. That is where the risk comes in." - Zahra Timsah, Co-founder and CEO, i-GENTIC AI

Governance Best Practices

To address these risks, organizations must prioritize strong governance. Despite the growing adoption of AI, only a small percentage of executives report having effective governance frameworks in place. This is troubling, especially when 57% of AI leaders admit they don’t fully trust their systems’ outputs, and 60% can’t explain how sensitive data is being used by their AI agents.

A good starting point is establishing a cross-functional "Agent Council." This council should include AI/ML experts, security professionals, risk officers, and business stakeholders to ensure alignment and prevent conflicting standards. Organizations should also implement policy-as-code guardrails within CI/CD pipelines, automating rules like hallucination thresholds or redaction of personally identifiable information (PII).
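A policy-as-code guardrail for PII can be as simple as a function that redacts known patterns and reports how many it found, so the pipeline can fail when the count exceeds what the policy allows. The following sketch covers only email addresses and US Social Security numbers with basic regular expressions. The patterns and the zero-findings default are illustrative assumptions, and production redaction generally needs broader coverage.

```python
import re

# Deliberately simple patterns for illustration; real PII detection needs more.
_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace matches with placeholders and return per-category counts."""
    counts: dict[str, int] = {}
    for label, pattern in _PATTERNS.items():
        text, found = pattern.subn(f"[REDACTED_{label}]", text)
        counts[label] = found
    return text, counts


def guardrail_passes(text: str, max_findings: int = 0) -> bool:
    """Pipeline check: pass only if the text contains at most `max_findings` PII matches."""
    _, counts = redact(text)
    return sum(counts.values()) <= max_findings


clean, found = redact("contact jane.doe@example.com, ssn 123-45-6789")
print(clean)
print(found)
print(guardrail_passes("deploy finished in 42s"))
```

Because the rule lives in code, it can be reviewed, tested, and versioned like any other pipeline step.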

Staged autonomy is another critical strategy: AI systems should begin as co-pilots with mandatory human intervention for high-stakes decisions, such as rolling back production changes.
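Staged autonomy can be expressed as a small authorization check that looks at the agent's current autonomy level, the action it wants to take, and whether a human has approved it. The level names and the set of high-stakes actions below are hypothetical examples, and the rule that high-stakes actions always need approval is one reasonable reading of the approach, not the only one.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    SUGGEST_ONLY = 0       # agent proposes, humans execute
    ACT_WITH_APPROVAL = 1  # agent executes after explicit sign-off
    ACT_AUTONOMOUSLY = 2   # agent executes routine actions on its own


# Actions that always require a human decision (illustrative list).
HIGH_STAKES = frozenset({"rollback_production", "delete_resource"})


def may_execute(action: str, level: Autonomy, human_approved: bool) -> bool:
    if level == Autonomy.SUGGEST_ONLY:
        return False
    if action in HIGH_STAKES:
        return human_approved
    return level == Autonomy.ACT_AUTONOMOUSLY or human_approved


print(may_execute("restart_canary", Autonomy.ACT_AUTONOMOUSLY, human_approved=False))
print(may_execute("rollback_production", Autonomy.ACT_AUTONOMOUSLY, human_approved=False))
print(may_execute("rollback_production", Autonomy.ACT_WITH_APPROVAL, human_approved=True))
```

Raising an agent's level then becomes an explicit, auditable configuration change rather than a gradual drift in behavior.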

"You can't govern what you haven't mapped." - Conor Bronsdon, Head of Developer Awareness, Galileo

Deep observability is key to effective governance. This means capturing not just traditional infrastructure metrics but also the entire "reasoning chain" behind AI decisions. Centralizing all prompts, datasets, and guardrail configurations in version-controlled repositories can enable audit-ready trails. By linking every change to a specific policy ID, organizations can set measurable targets - like maintaining a hallucination rate of ≤ 1% - and trigger automatic alerts or deployment halts if thresholds are breached. Strong governance not only reduces technical risks but also safeguards business continuity.
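The threshold idea can be sketched as a gate that compares an observed rate against a limit tied to a policy ID and reports whether the deployment should be halted. The policy ID format, metric name, and sample counts below are placeholders chosen for illustration. How outputs get flagged as hallucinations is outside the scope of this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    policy_id: str    # identifier that audit records link back to
    metric: str
    max_value: float  # inclusive upper bound


@dataclass(frozen=True)
class GateResult:
    policy_id: str
    observed: float
    breached: bool


def evaluate(policy: Policy, flagged: int, total: int) -> GateResult:
    """Compare the observed rate with the policy limit; breach only if strictly above it."""
    if total <= 0:
        raise ValueError("total must be positive")
    observed = flagged / total
    return GateResult(policy.policy_id, observed, observed > policy.max_value)


hallucination_policy = Policy(policy_id="POL-001", metric="hallucination_rate", max_value=0.01)

within_limit = evaluate(hallucination_policy, flagged=1, total=100)
over_limit = evaluate(hallucination_policy, flagged=3, total=100)
print(within_limit)
print(over_limit)
if over_limit.breached:
    print(f"halt deployment: {over_limit.policy_id} breached at {over_limit.observed:.1%}")
```

Carrying the policy ID through to the result is what makes the alert traceable to a specific, versioned rule.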


Business Impact for U.S. Organizations

Economic Benefits

AI-driven error detection is reshaping financial outcomes for businesses. By 2030, AI is expected to contribute a staggering $15.7 trillion to the global economy, with generative AI alone adding between $2.6 trillion and $4.4 trillion each year. In the first half of 2025, AI investments played a key role in driving over two-thirds of the 1.6% annualized GDP growth.

One major advantage lies in cost savings. Automating routine support tasks and incident triage allows companies to manage IT operations without committing to expensive long-term staffing. Additionally, adopting a "shift-left" strategy - where error testing occurs earlier in the development process - helps avoid the high costs of addressing defects later in deployment. Developer productivity sees a significant boost, with estimates suggesting a 55% improvement when AI tools are used. In fact, 92% of U.S.-based developers already rely on AI coding tools to enhance the quality of their work. Tools like GitHub Copilot can accelerate code reviews by up to 7 times, all while detecting vulnerabilities and style inconsistencies. In industrial applications, frameworks that leverage large language models (LLMs) have demonstrated over 98% precision in identifying root causes of errors.

"With the implementation of AI, I believe the most relevant and unique change will be improvements in the quality of products, given the ability to better analyze, synthesize information, and make recommendations."

- Inbal Shani, Chief Product Officer, Twilio

Organizational Readiness

The ability to harness AI’s potential depends heavily on an organization’s preparedness. Alarmingly, nearly 95% of enterprise AI pilot programs fail to deliver measurable results. The success of AI initiatives often hinges on foundational readiness, starting with data infrastructure. About 45% of organizations cite data accuracy and bias as their biggest challenges to adopting AI. Reliable AI systems require high-quality, integrated data from sources like IoT devices, ERP systems, and legacy platforms.

Executive buy-in is another critical factor. Among companies with strong C-level support, 84% report achieving ROI, compared to 75% of those without it. While AI can streamline processes, it cannot fix underlying inefficiencies. Organizations with modern software engineering practices - such as robust CI/CD pipelines and automated testing - see far greater benefits. On the flip side, weak practices can lead to the rapid buildup of technical debt. AI projects can vary widely in cost, from $50,000 for smaller initiatives to over $5 million for large-scale deployments. Custom solutions that leverage proprietary data deliver 2–3 times the ROI of off-the-shelf models.

"Strong quality practices get faster. Weak practices accumulate debt faster."

- Rob Bowley, Product and Technology Consultant, Pragmatic Partners

These readiness factors lay the groundwork for AI chatbots to provide meaningful support in deployment workflows.

How AI Chatbots Support Deployment Teams

AI chatbots, such as Chat Whisperer, are revolutionizing how deployment teams tackle errors. These tools offer real-time assistance throughout the incident lifecycle, enabling engineers to interact with debugging systems in natural language. For instance, a developer could ask, "Why did this assertion fail?" and receive detailed root cause analysis. Advanced assistants can even act autonomously, executing debugger commands, analyzing program states, and navigating call stacks to identify issues.

This real-time support dramatically improves resolution rates. AI-powered debugging assistants provide actionable insights that significantly reduce the time it takes to fix incidents. Chat Whisperer’s customizable platform can be trained on a company’s specific data and policies, ensuring that deployment teams receive guidance that aligns with their unique infrastructure and workflows.

"A powerful aspect of ChatDBG is its ability to exploit real-world knowledge in its analyses... without specific instruction or user intervention."

- Kyla H. Levin et al., ChatDBG Developers

Conclusion

AI-powered error detection is reshaping how software is deployed. Research highlights that tools like LogSage and TrainCheck excel at identifying errors, even catching silent failures that traditional methods often overlook. These advancements promise both operational improvements and financial benefits.

The numbers tell a clear story. Developers spend roughly 35% of their time on testing. Tools such as Cypress simplify automation by 31% and speed up execution by 21%. Meanwhile, ChatDBG offers fixes at a cost of under $0.20 per query, with an impressive 85% success rate. For U.S. companies, these efficiencies translate into lower costs and quicker product rollouts.

But leveraging these advancements requires preparation. AI thrives in environments with clean, structured data from CI/CD logs, historical incident records, and internal documentation. Without this foundation, organizations may struggle to harness its full potential. In fact, while AI can enhance strong engineering practices, it may exacerbate issues like technical debt in less-prepared teams.

"AI is fundamentally redefining the role of software engineers and developers, moving them from code implementers to orchestrators of technology."

The future lies in pairing AI-driven error detection with supportive tools that align with specific company needs. Platforms like Chat Whisperer, for example, provide real-time, customized assistance tailored to organizational policies and infrastructure. When combined with reliable data, executive backing, and solid engineering practices, AI can transform deployment processes - shifting from reactive problem-solving to proactive error prevention.

FAQs

How does AI help detect errors during software deployment?

AI plays a key role in improving error detection during software deployment by automating the review of log data and spotting potential problems early. With the help of machine learning models, it can predict and highlight possible build or deployment failures, minimizing the chances of downtime or critical errors.

On top of that, AI-powered tools excel at performing root-cause analysis, offering clear and actionable recommendations to fix issues faster. This not only simplifies the deployment process but also boosts reliability, ensuring smoother workflows in real-world CI/CD pipelines.

What challenges come with using AI for detecting errors during software deployment?

AI has the potential to improve error detection during software deployment, but it comes with its own set of challenges. One major factor is the quality of training data. Incomplete logs, inconsistent data formats, or a lack of diverse failure examples can result in missed errors or false positives. On top of that, AI models often struggle to adapt to different environments, making it tricky to apply a single approach across various microservices or cloud platforms.

Another hurdle is integrating AI into existing CI/CD pipelines. This process isn’t straightforward - it demands expertise to handle real-time data processing, manage high computational requirements, and ensure compatibility with tools like version control systems and monitoring platforms. Privacy and compliance concerns further complicate matters, especially when dealing with sensitive information.

Transparency in AI models poses yet another challenge. Many models operate like a "black box", making it hard to pinpoint the root cause of an error. This often requires advanced tools and human oversight to trace issues back to their source. That said, platforms like Chat Whisperer are stepping in with customizable AI solutions designed to tackle these obstacles while meeting enterprise-specific requirements.

How can businesses effectively integrate AI into software deployment workflows?

To weave AI effectively into software deployment workflows, businesses should first pinpoint recurring challenges like build failures, test regressions, or runtime errors. By leveraging AI models trained on historical data, teams can spot early warning signs and even automate responses - such as initiating rollbacks or adaptive deployments - helping to reduce risks and enhance overall efficiency.

A gradual rollout is key to a successful implementation. Start with pilot projects to test the accuracy of AI models, fine-tune data pipelines, and closely observe results. Integrate AI tools with your existing DevOps systems, but ensure a human-in-the-loop remains involved for critical decisions. Incorporating strategies like version-controlled prompts, synthetic test cases, and feedback loops will help the AI adapt alongside your evolving codebase.

Embracing practices like automated test generation and anomaly detection allows businesses to unlock the full potential of AI. These techniques not only reduce risks but also pave the way for faster, safer, and more dependable software deployments.