How AI Chatbots Ensure Regulatory Compliance

AI chatbots are reshaping how businesses handle regulatory compliance, especially in industries like healthcare, finance, and ecommerce. However, without proper safeguards, they can become liabilities, leading to fines and reputational damage. For example, 28% of AI-related fines stem from chatbot misconfigurations, and compliance gaps affect 73% of companies using these tools.

Key takeaways:

- Compliance Risks: Chatbots must meet regulations like GDPR, HIPAA, and CCPA to avoid penalties.

- Real-World Examples: In 2023, a financial firm faced audits after its chatbot violated SEC rules.

- Automation Benefits: Properly designed chatbots can cut policy violations by up to 40% with dual-agent monitoring and handle up to 70% of routine customer queries.

- Emerging Laws: California's SB 243 now mandates AI disclosure during extended interactions.

To ensure compliance, businesses need chatbots with built-in safeguards, automated monitoring, and training on specific policies. Tools like Chat Whisperer simplify this process with features like policy integration, real-time audits, and automatic updates for changing regulations. Starting at just $5/month, even small businesses can manage compliance effectively.



What Is Regulatory Compliance for AI Chatbots?

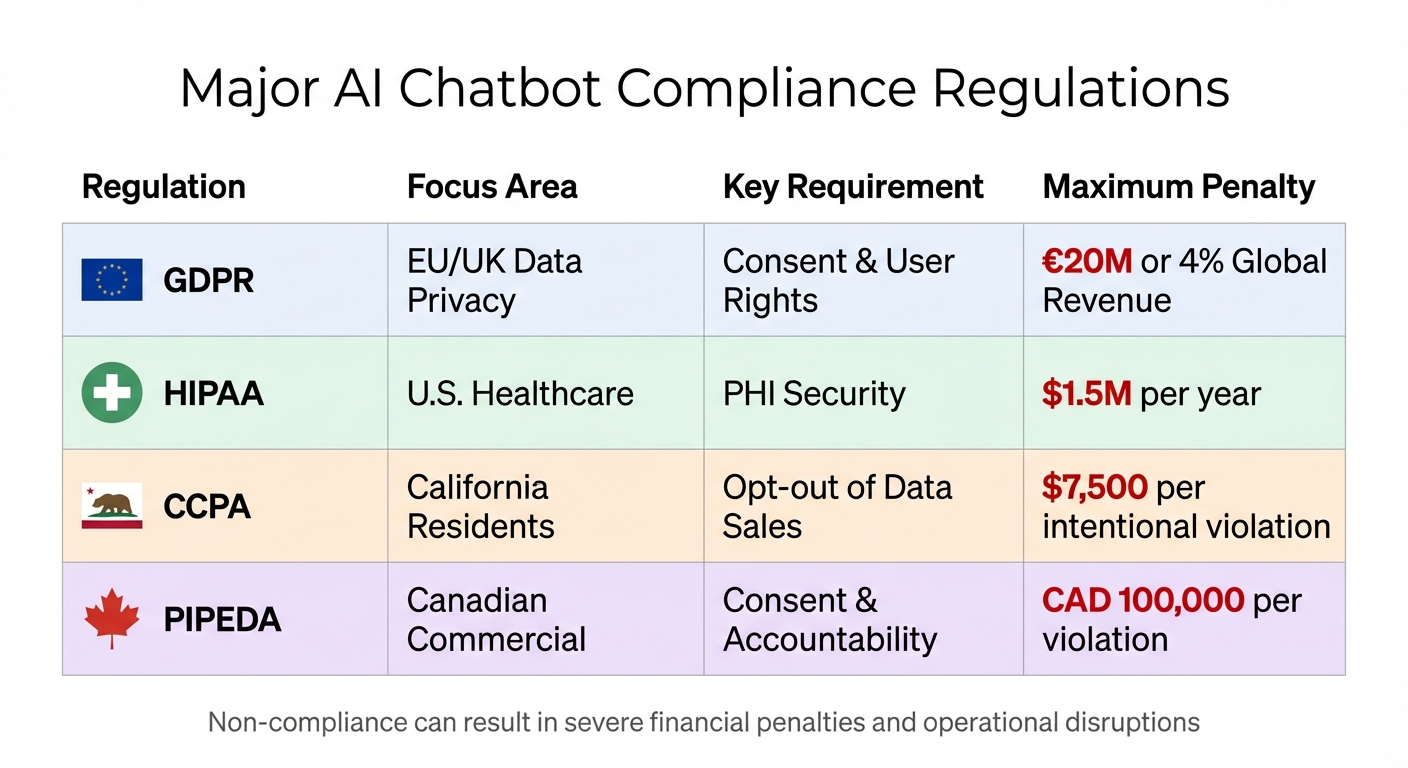

Major AI Chatbot Compliance Regulations Comparison: GDPR, HIPAA, CCPA, and PIPEDA

Regulatory compliance for AI chatbots means adhering to laws that govern how businesses collect, store, and use customer data. These laws focus on data privacy, security, and transparency, shaping how chatbots interact with users and manage their information.

Compliance isn’t optional - it’s critical. Failing to comply can lead to steep penalties: GDPR violations can cost up to €20 million or 4% of global annual revenue, whichever is higher; HIPAA fines range from $100 to $50,000 per incident (capped at $1.5 million per year for repeated violations of the same requirement); and California's CCPA allows penalties of $2,500 per unintentional violation and $7,500 per intentional one. As Maria Prokhorenko of BotsCrew explains:

"Real GDPR compliance isn't just about looking good on the surface - it is about building trust through responsible, transparent, and secure Generative AI and data practices at every level of your organization."

But it’s not just about avoiding fines. Compliance also protects businesses from disruptions. For example, in March 2023, Italy’s Data Protection Authority temporarily banned ChatGPT for GDPR violations, including a lack of transparency around data collection and the absence of a legal basis for processing personal data. OpenAI had to add consent mechanisms and an opt-out option for training data before the ban was lifted. The financial stakes are high too - the average cost of a data breach reached $4.88 million in 2024.

Key Regulations AI Chatbots Must Follow

Three major regulations dominate the compliance landscape for chatbots in the U.S. and globally: GDPR, HIPAA, and CCPA. Each focuses on different aspects of data protection and user rights.

GDPR (General Data Protection Regulation):

This regulation applies to personal data of individuals in the EU and UK. It requires businesses to have a legal basis for data processing, collect only necessary data, and respect user rights such as the "right to be forgotten" and the "right to access." Companies must report qualifying data breaches to the supervisory authority within 72 hours of becoming aware of them, and must inform affected individuals without undue delay when the breach poses a high risk to them. "Privacy by Design" must also be incorporated into systems from the start.

HIPAA (Health Insurance Portability and Accountability Act):

HIPAA governs Protected Health Information (PHI) in U.S. healthcare. Chatbots interacting with patients must use encryption, role-based access controls, and secure vendor agreements (like Business Associate Agreements). Non-compliance has led to multimillion-dollar settlements in some cases.

CCPA/CPRA (California Consumer Privacy Act):

This law gives California residents the right to know what data is being collected about them, delete that data, and opt out of its sale. Chatbots must provide opt-out options, such as a "Do Not Sell My Personal Information" link, and meet requirements for automated decision-making transparency.

In addition to these frameworks, chatbots must also meet disclosure requirements under newer laws. For instance, Utah's AI Policy Act and Maine's Chatbot Disclosure Act require businesses to inform users when they’re interacting with AI rather than a human. Meanwhile, the EU AI Act takes a risk-based approach: most chatbots fall into the "Limited-Risk" category (requiring transparency), while those used in sensitive areas such as medical or financial advice can be classified as "High-Risk", which demands human oversight and conformity assessments.

| Regulation | Focus Area | Key Requirement | Maximum Penalty |
|---|---|---|---|
| GDPR | EU/UK Data Privacy | Consent & User Rights | €20M or 4% of global revenue |
| HIPAA | U.S. Healthcare | PHI Security | $1.5M per year |
| CCPA | California Residents | Opt-out of Data Sales | $7,500 per intentional violation |
| PIPEDA | Canadian Commercial | Consent & Accountability | CAD 100,000 per violation |

These frameworks create a diverse set of compliance demands across industries.
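The penalty ceilings above are easier to compare once they are written as arithmetic. The following is a minimal sketch, not legal advice: the function names are hypothetical, the GDPR figure applies the "whichever is higher" rule to the €20 million and 4% values quoted above, and the CCPA figure simply multiplies the per-violation amounts.

```python
def gdpr_max_fine_eur(global_annual_revenue_eur: float) -> float:
    """Upper bound of a GDPR fine: EUR 20M or 4% of revenue, whichever is higher."""
    fixed_cap_eur = 20_000_000
    revenue_based_cap = global_annual_revenue_eur * 4 / 100
    return max(fixed_cap_eur, revenue_based_cap)


def ccpa_max_exposure_usd(unintentional: int, intentional: int) -> int:
    """Worst-case CCPA civil penalties for a given count of violations."""
    return unintentional * 2_500 + intentional * 7_500


# A company with EUR 1 billion in revenue faces a revenue-based ceiling,
# while a company with EUR 100 million hits the fixed EUR 20M ceiling.
large = gdpr_max_fine_eur(1_000_000_000)
small = gdpr_max_fine_eur(100_000_000)
```

Because CCPA penalties are assessed per violation, a misconfigured chatbot that mishandles thousands of conversations can accumulate exposure quickly even though the per-violation figure looks small.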

How Compliance Differs by Industry

The specifics of compliance vary depending on the industry, as each sector has unique data handling requirements.

- Healthcare Chatbots: These chatbots must comply with HIPAA’s strict rules for handling patient data, which include encrypting communications, maintaining audit trails, and securing Business Associate Agreements with vendors. In some cases, like under Nevada’s AB 406, further restrictions apply to AI in mental health settings. Healthcare bots providing diagnostic support are often labeled "High-Risk" under the EU AI Act and may require formal assessments before use.

- Financial Services Chatbots: Financial chatbots face heightened disclosure requirements. Laws like Utah’s AI Policy Act require clear notifications when AI is used in regulated professions, especially in high-stakes interactions. These chatbots must also allow users to opt out of automated profiling that impacts credit decisions and comply with the Gramm-Leach-Bliley Act (GLBA). Courts have emphasized that companies remain accountable for misleading information provided by their chatbots, ruling out the "AI did it" defense.

- Ecommerce Chatbots: In ecommerce, compliance often revolves around GDPR and CCPA requirements for marketing consent and consumer data tracking. This includes offering clear "Do Not Sell" options and maintaining transparency in data practices. For example, LunaCraft’s chatbot includes a "Minimal Data Mode" that deletes logs after 30 days, achieving GDPR compliance while boosting qualified leads by 38%.

Across all industries, core principles like minimizing data collection, ensuring transparency, and giving users control over their information are essential for meeting compliance standards.
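Data minimization often comes down to a retention rule like the 30-day log deletion in the ecommerce example. Below is a minimal sketch of such a rule, assuming each log entry is a dictionary with a timezone-aware `created_at` field; the function name and the entry layout are illustrative assumptions, not a description of any vendor's implementation.

```python
from datetime import datetime, timedelta, timezone


def purge_expired(logs, now, retention_days=30):
    """Return only the log entries that are still inside the retention window."""
    cutoff = now - timedelta(days=retention_days)
    return [entry for entry in logs if entry["created_at"] >= cutoff]


# Hypothetical chat logs: one older than 30 days, one recent.
now = datetime(2025, 1, 31, tzinfo=timezone.utc)
logs = [
    {"id": "a", "created_at": datetime(2024, 12, 15, tzinfo=timezone.utc)},
    {"id": "b", "created_at": datetime(2025, 1, 20, tzinfo=timezone.utc)},
]
kept = purge_expired(logs, now)
```

In practice a job like this would run on a schedule and delete from the underlying store rather than filter an in-memory list, but the cutoff logic is the same.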

How AI Chatbots Support Compliance

AI chatbots help maintain compliance by integrating safeguards and automating monitoring processes. These tools are designed to protect sensitive data, adapt to shifting regulations, and ensure every interaction adheres to company policies. Aveni.ai describes this role succinctly:

"AI is no longer just a tool for automation - it's a compliance sensor."

Data Privacy and Security Controls

To safeguard data, compliant chatbots employ encryption methods like TLS 1.2/1.3 for transmission and AES-256 for storage. Access to sensitive information is tightly controlled through Role-Based Access Control (RBAC) and Multi-Factor Authentication (MFA), ensuring only authorized personnel can access specific records. Additionally, chatbots mask personally identifiable information (PII) during processing to meet GDPR and HIPAA requirements.
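PII masking can be illustrated with a small pattern-based redactor. This is a sketch under simplifying assumptions: the three regular expressions cover only email addresses, U.S.-style Social Security numbers, and 10-digit phone numbers, and the names `PII_PATTERNS` and `mask_pii` are hypothetical. Production systems typically combine patterns like these with dedicated entity-recognition tooling.

```python
import re

# Illustrative patterns only; real deployments need broader, locale-aware detection.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def mask_pii(text: str) -> str:
    """Replace each detected PII value with a bracketed label before further processing."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


masked = mask_pii("Reach me at jane.doe@example.com or 555-123-4567, SSN 123-45-6789.")
# masked == "Reach me at [EMAIL] or [PHONE], SSN [SSN]."
```

Masking before the text reaches the language model or the log store means downstream components never see the raw values, which is simpler to audit than trying to scrub them afterwards.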

Advanced systems take security further with dual-agent monitoring. One agent manages conversations, while another audits them for compliance risks. This setup can cut policy violations by up to 40% by identifying risks in real time. Every interaction is logged in an immutable audit trail, which includes consent timestamps and model version changes, creating a reliable record for regulatory scrutiny.
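One common way to make an audit trail tamper-evident is to chain entries together with hashes, so that changing any past record invalidates everything after it. The sketch below shows that idea using Python's standard `hashlib` and `json` modules; the record fields and function names are assumptions for illustration, and a real deployment would also need write-once storage to make the log truly immutable.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(record: dict, prev_hash: str) -> str:
    """Hash the record together with the previous entry's hash."""
    payload = json.dumps(record, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(chain: list, record: dict) -> None:
    """Append a record, linking it to the entry before it."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    chain.append({"record": record, "prev_hash": prev_hash,
                  "hash": _digest(record, prev_hash)})


def verify_chain(chain: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in chain:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(entry["record"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical entries carrying a consent timestamp and a model version.
trail = []
append_entry(trail, {"event": "consent", "user": "u1", "at": "2025-01-05T10:00:00Z"})
append_entry(trail, {"event": "reply", "user": "u1", "model_version": "v2"})
```

Calling `verify_chain(trail)` returns `True` for the untouched log and `False` once any stored record is modified, which is the property auditors care about.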

These measures not only protect data but also prepare the system to adapt as regulations evolve.

Automatic Updates for Changing Regulations

Keeping up with ever-changing regulations is a challenge, especially when relying on manual updates. Modern chatbots address this by utilizing Retrieval-Augmented Generation (RAG), which allows them to reference versioned policy libraries and ensure their responses align with the latest rules. When new laws are enacted, the system updates its knowledge base automatically, eliminating the need for full retraining.
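The versioned policy library is the part of this design that removes the need for retraining: updating the bot means adding a new policy version, not changing the model. Here is a simplified sketch of the lookup step, assuming policies are selected by an identifier and an effective date rather than by the embedding search a full RAG pipeline would use. `PolicyVersion`, `version_in_force`, and `build_context` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass(frozen=True)
class PolicyVersion:
    policy_id: str        # hypothetical identifier, e.g. "data-retention"
    effective_from: date  # first day this version applies
    text: str


def version_in_force(library: List[PolicyVersion], policy_id: str,
                     as_of: date) -> Optional[PolicyVersion]:
    """Pick the newest version of a policy that is already effective on `as_of`."""
    candidates = [p for p in library
                  if p.policy_id == policy_id and p.effective_from <= as_of]
    return max(candidates, key=lambda p: p.effective_from) if candidates else None


def build_context(question: str, policy: PolicyVersion) -> str:
    """Assemble the text handed to the model, including a citable policy reference."""
    return (f"Policy {policy.policy_id} (effective {policy.effective_from.isoformat()}):\n"
            f"{policy.text}\n\nQuestion: {question}")


library = [
    PolicyVersion("data-retention", date(2023, 1, 1), "Delete chat logs after 90 days."),
    PolicyVersion("data-retention", date(2025, 1, 1), "Delete chat logs after 30 days."),
]
current = version_in_force(library, "data-retention", date(2025, 3, 1))
```

Keeping old versions in the library, rather than overwriting them, also lets an auditor reconstruct which rule the chatbot was applying on any past date.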

Some platforms enhance this capability with regulatory change intelligence, which tracks rule updates, maps them to internal controls, and notifies compliance officers with detailed impact analyses. This proactive approach shifts compliance efforts from periodic audits to ongoing monitoring. Acting as a "compliance sensor", the chatbot identifies potential issues, like control drift, and prompts corrective actions before violations occur.

In addition, automated safety evaluations assess every output for risks such as bias, misinformation, or inappropriate content before the user sees it. These evaluations run continuously, flagging problematic content in real time. Interestingly, organizations that review all AI-generated output are 3x more likely to see strong financial benefits from their AI investments. However, 27% of organizations review less than 20% of such content, leaving significant compliance gaps.
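A pre-release safety check can be pictured as a gate that every draft reply passes through before it is shown. The sketch below assumes each risk category has a scoring function returning a value between 0 and 1; the gate logic is generic, while `guarantee_scorer` is a toy stand-in for whatever bias or misinformation classifier an organization actually uses.

```python
from typing import Callable, Dict


def release_or_block(draft: str, scorers: Dict[str, Callable[[str], float]],
                     threshold: float = 0.5) -> dict:
    """Score a draft reply on every risk category and block it if any score is too high."""
    scores = {name: scorer(draft) for name, scorer in scorers.items()}
    flagged = sorted(name for name, score in scores.items() if score >= threshold)
    if flagged:
        return {"released": False, "flagged": flagged, "text": None}
    return {"released": True, "flagged": [], "text": draft}


def guarantee_scorer(text: str) -> float:
    """Toy scorer (assumption): flags replies that promise a guaranteed outcome."""
    return 1.0 if "guaranteed" in text.lower() else 0.0


scorers = {"misleading_claim": guarantee_scorer}
blocked = release_or_block("Returns are guaranteed to be 12% a year.", scorers)
allowed = release_or_block("Past performance does not predict future returns.", scorers)
```

A blocked draft would typically be regenerated or routed to a human reviewer rather than silently dropped.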

While automatic updates are key to staying compliant, tailoring the chatbot to a company’s specific policies adds another layer of precision.

Training Chatbots on Company Policies

Beyond technical safeguards and automatic updates, training chatbots on company-specific policies ensures their responses meet both regulatory and internal standards. Generic chatbots often fall short of addressing industry-specific needs. To bridge this gap, businesses can train their bots using internal documents like employee handbooks, legal databases, and compliance checklists.

Tools such as URL crawlers and data loaders help sync information directly from company intranets or HR portals. Before training, it’s crucial to inventory approved policies and remove outdated documents. For transparency and auditability, chatbots should be configured to cite sources, referencing the exact policy or regulation that supports their responses.

To handle complex cases, implement Human-in-the-Loop (HITL) protocols. These protocols can escalate sensitive issues - such as mental health concerns, discrimination claims, or intricate legal matters - to compliance officers. By setting automatic triggers for such topics, companies maintain accountability while allowing chatbots to efficiently manage routine inquiries.
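Automatic escalation triggers can start as something very simple. The following sketch routes a message to a human whenever it mentions one of the sensitive topics listed above; the keyword lists and the names `ESCALATION_TRIGGERS` and `route_message` are illustrative assumptions, and a production system would likely use a classifier rather than substring matching.

```python
# Hypothetical trigger keywords per sensitive topic.
ESCALATION_TRIGGERS = {
    "mental_health": ("self-harm", "suicide", "crisis"),
    "discrimination": ("discrimination", "harassment"),
    "legal": ("lawsuit", "subpoena", "attorney"),
}


def route_message(message: str) -> dict:
    """Send the message to a human if it touches any trigger topic, else to the bot."""
    lowered = message.lower()
    matched = sorted(
        topic for topic, keywords in ESCALATION_TRIGGERS.items()
        if any(keyword in lowered for keyword in keywords)
    )
    return {"handler": "human" if matched else "bot", "topics": matched}


escalated = route_message("I want to report harassment and may file a lawsuit.")
routine = route_message("What is your refund policy?")
```

Recording the matched topics alongside the routing decision gives compliance officers the context they need when the case reaches them.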

Transparency and Risk Management with AI Chatbots

When users engage with AI chatbots, they need to know upfront that they're not speaking with a human. This isn't just a courtesy - in a growing number of jurisdictions it's a legal requirement, and it builds trust. For instance, on October 13, 2025, California enacted Senate Bill 243, the first U.S. law to require "companion" AI chatbots to clearly disclose their non-human nature. For users known to be minors, the law also requires these disclosures to be repeated at least every three hours during extended interactions. Such measures lay the groundwork for clear communication practices that foster trust and meet legal standards.

Disclosing AI Interactions to Users

Every interaction with an AI chatbot must start with a clear disclosure. This can take the form of robot icons, bold labels, or spoken introductions, all designed to appear within two seconds of the interaction's start. Transparency is reinforced throughout the conversation with persistent banners or footers. For longer sessions, periodic reminders ensure users remain aware they're talking to an AI.
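Periodic reminders reduce to a timing check on each turn of the conversation. This sketch uses the three-hour interval cited for California's SB 243 as a default; the function name and the idea of tracking a `last_disclosed_at` timestamp per session are assumptions for illustration.

```python
from datetime import datetime, timedelta

REMINDER_INTERVAL = timedelta(hours=3)  # default taken from the SB 243 interval


def disclosure_due(last_disclosed_at, now, interval=REMINDER_INTERVAL) -> bool:
    """Return True if the AI disclosure should be shown (again) on this turn."""
    if last_disclosed_at is None:
        return True  # nothing shown yet: always disclose at the start
    return now - last_disclosed_at >= interval


session_start = datetime(2025, 6, 1, 9, 0)
first_turn = disclosure_due(None, session_start)
after_one_hour = disclosure_due(session_start, session_start + timedelta(hours=1))
after_three_hours = disclosure_due(session_start, session_start + timedelta(hours=3))
```

Passing a shorter `interval` makes it straightforward to apply a stricter internal policy than the legal minimum.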

Beyond visual or verbal cues, companies also include AI disclosure clauses in Master Service Agreements and Terms of Service, ensuring users acknowledge the nature of these interactions. In sensitive scenarios - like discussions about self-harm or mental health crises - chatbots are programmed to redirect users to human-led resources, maintaining transparency while prioritizing safety.

However, disclosure requirements vary by state. For example, Colorado's AI Act mandates disclosures unless it's "obvious to a reasonable person" that the interaction involves AI. In contrast, Utah only requires disclosure if a consumer specifically asks or if the situation is deemed high-risk. Jonathan Tam, a partner at Baker McKenzie, highlights the importance of proactive compliance:

"Waiting for litigation or regulators to dictate priorities is likely to be more costly and more disruptive than building compliance and governance into chatbot design from the outset."

These practices not only satisfy legal demands but also align with broader risk management strategies.

Testing and Risk Management Practices

Clear disclosures are just the beginning - ongoing testing is essential for identifying and addressing emerging risks. Frameworks like the NIST AI Risk Management Framework guide organizations in tackling challenges such as algorithmic bias and performance issues throughout the AI lifecycle. Some companies implement dual-agent systems: one agent manages the conversation while another works in the background, monitoring for policy violations, sentiment changes, and compliance concerns in real time.

Automated safety evaluations further enhance risk management by scoring chatbot responses for potential issues like bias, misinformation, or harmful content before they reach the user. As noted earlier, reviewing all AI-generated output correlates with stronger financial results, yet 27% of organizations review less than 20% of it, leaving critical gaps in oversight. Maintaining detailed logs of every interaction adds another layer of accountability, creating a defensible record for regulatory inquiries.

A cautionary tale comes from the Federal Trade Commission's settlement with DoNotPay, Inc. in September 2024. The company had marketed its AI chatbot as the "world's first robot lawyer", but the FTC found its claims misleading because the bot's outputs weren't validated by licensed professionals. This case highlights why accurate disclosures and rigorous oversight are non-negotiable for companies deploying AI chatbots.

How Chat Whisperer Helps with Compliance Automation

Chat Whisperer is designed to address the growing need for seamless compliance by embedding essential regulatory safeguards directly into its platform. This tool helps businesses automate compliance workflows while maintaining strict oversight of customer interactions. From ecommerce to healthcare, where precision in meeting regulatory requirements is non-negotiable, Chat Whisperer supports industries with its adaptable features.

Compliance Features in Chat Whisperer

With Chat Whisperer, businesses can train AI agents using their own company documentation, such as FAQs, internal policies, and specific business rules. The platform’s intuitive no-code interface lets users upload documents and set visual rules, simplifying compliance management. It also integrates with CRMs and other tools, automating tasks like email follow-ups to ensure compliance is maintained across all customer interactions. Additionally, users can adjust the chatbot's tone to align with their brand and set triggers to escalate sensitive issues to human oversight. According to data, a well-trained chatbot can handle up to 70% of customer queries, allowing human teams to focus on more complex, compliance-heavy cases.

This platform is particularly valuable for companies lacking strong compliance controls - an issue affecting 73% of businesses. It ensures robust data protection and adherence to regulatory standards. By providing business-specific training within a secure framework, Chat Whisperer reduces risk while enhancing operational efficiency.

Chat Whisperer Pricing Plans

Chat Whisperer offers three pricing tiers, each tailored to meet different business needs while providing core compliance features:

| Plan Name | Price | Key Compliance Features | Word Limit |
|---|---|---|---|
| Pay Per Use | $5/month | Data loaders, URL crawler for policy training | 3,750 words/month |
| Starter | $20/month | Unlimited team members, custom domain | 30,000 words/month |
| Add-on | $50/month | Multi-model support (Claude, ChatGPT) | 75,000 words/month |

Every plan includes tools like data loaders for PDF, Word, and CSV files, as well as a URL crawler that can directly pull policy documents from your website. The inclusion of unlimited team members across all tiers ensures that compliance, legal, and customer service teams can collaborate efficiently on chatbot training and oversight without incurring extra costs.

Conclusion

AI chatbots have grown far beyond their initial role as customer service tools. Today, they serve as powerful compliance monitors, capable of validating and enforcing regulatory standards in real time. By automating audit trails, embedding industry-specific safeguards, and identifying high-risk interactions before they escalate, these systems can reduce audit preparation time by a reported 60%. They can also help businesses expand across multiple jurisdictions without a matching rise in compliance costs.

The risk-reduction figures are equally compelling. Dual-agent AI systems have been shown to cut policy violations by 40% in just 90 days. With compliance gaps still affecting 73% of companies using AI chatbots, platforms that incorporate features like fact validation, immutable logging, and automatic escalation protocols are closing that gap. These tools maintain the speed and scalability businesses need while ensuring compliance.

Chat Whisperer exemplifies this evolution by combining AI training with robust compliance measures. Unlike generic chatbots, which struggle to handle complex requirements like HIPAA, GDPR, or SOC 2 without heavy manual intervention, Chat Whisperer lets businesses take control. They can train the AI on their specific policies, create rules through an easy-to-use no-code interface, and integrate with existing CRMs. Compliance becomes a built-in feature of every interaction, not an afterthought. And with pricing starting at just $5/month, even small businesses can access enterprise-level compliance solutions.

"The most effective compliance strategies are those baked into the AI system from the start." – Lexology, Legal AI Compliance Alert

As global discussions around AI regulation doubled between 2022 and 2023, it’s clear that investing in purpose-built compliance automation isn’t just smart - it’s necessary for managing risk and achieving sustainable growth. These advancements underscore the importance of a compliance-by-design approach for modern businesses.

FAQs

What should a compliant chatbot log in an audit trail?

A chatbot designed for compliance must keep detailed logs of critical activities. This includes recording user and system interactions, noting timestamps, tracking data that has been accessed or changed, identifying users, and storing consent records. These logs are crucial for ensuring transparency and accountability, which play a key role in adhering to compliance requirements.

How do I keep a chatbot compliant as laws change?

To keep your chatbot in line with changing laws, it's crucial to have continuous compliance practices in place. Regularly review and adjust its operations to meet regulations such as GDPR, CCPA, and various state-specific laws. Focus on key actions like conducting routine compliance audits, maintaining transparency with users, safeguarding data privacy, and staying up-to-date on legal changes. Additionally, leveraging AI tools with compliance safeguards and involving human oversight can reduce risks and help prevent policy breaches.

When should a chatbot escalate to a human for compliance?

When a chatbot encounters issues that are too complex, sensitive, or tied to compliance, it should hand things over to a human. This might happen with unclear policy questions, possible regulatory breaches, or cases where human judgment is essential to uphold legal and regulatory standards. Escalating these situations ensures the response is accurate and meets necessary compliance requirements.